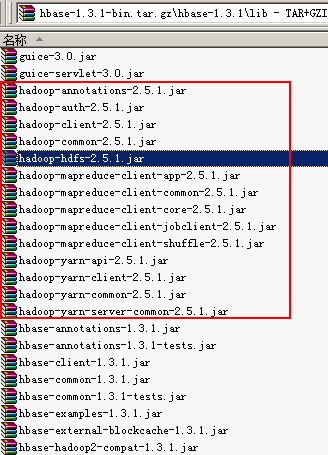

Add the fully qualified class name of the cleaner here.

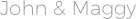

To implement your own BaseHFileCleanerDelegate, just put it in HBase's classpath. The cleaners are called in order, so put the cleaner that prunes the most files in front.

By default, HBase-Writer writes crawled URL content into an HBase table as individual records, or 'rowkeys'. Each fetched URL is represented by a 'rowkey' in an HBase table.

Since the archive directory of HBase is cleaned regularly, you can set the value on the master of cluster B to a larger one.

Therefore, the =false attribute is a key step to clear the oldWALs files. As you can see from the screenshot, the original 1.

Here is the result after I ran `hdfs dfs -du -h /data/hbase`. You can see that most of the space is in the 'oldWALs' folder:

0 0 /data/hbase/.tmp
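The `hdfs dfs -du` check mentioned above can be scripted to rank directories by size. The following is a minimal sketch, not part of the original post. It assumes the plain (non `-h`) output has three whitespace-separated columns (size in bytes, disk space consumed, path), as the `0 0 /data/hbase/.tmp` line suggests. The sample sizes and the helper names `parse_du` and `largest` are hypothetical.

```python
def parse_du(output):
    """Parse '<size> <disk_space_consumed> <path>' lines into (path, size) pairs.

    Assumes byte counts, i.e. `hdfs dfs -du` run without -h.
    """
    entries = []
    for line in output.splitlines():
        parts = line.split()
        # Skip blank lines and anything that does not match the assumed layout.
        if len(parts) != 3 or not parts[0].isdigit():
            continue
        size, _consumed, path = parts
        entries.append((path, int(size)))
    return entries


def largest(entries, top=3):
    """Return the `top` largest entries, biggest first."""
    return sorted(entries, key=lambda e: e[1], reverse=True)[:top]


# Hypothetical sample output; only the first line appears in the post.
SAMPLE = """\
0 0 /data/hbase/.tmp
1048576 3145728 /data/hbase/archive
52428800 157286400 /data/hbase/oldWALs
"""

if __name__ == "__main__":
    for path, size in largest(parse_du(SAMPLE)):
        print(f"{size:>12} {path}")
```

In practice the text would come from running the command (for example through `subprocess.run`) rather than from a hard-coded sample.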

When the HLog files in /hbase/WALs have been persisted to the storage files and are no longer needed, they are transferred to the / in HDFS. Soon the size of the oldWALs directory is zero. When a compaction occurs, the previous HFiles are moved to the archive and are deleted only if they are not associated with a snapshot.

Kylin persists all data (metadata and cube) in HBase. You may sometimes want to export the data for whatever purpose (backup, migration, troubleshooting, etc.). This page describes the steps to do this, and there is also a Java app that makes it easy.

Heritrix is written by the Internet Archive, and HBase Writer enables Heritrix to store crawled content directly into HBase tables running on the Hadoop Distributed FileSystem.
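The archive rule described above (an archived HFile is deleted only if no snapshot references it) and the advice to put the most aggressive cleaner first can be pictured with a small model. This is a simplified sketch in Python, not HBase's Java API. The function names, file names, ages, and TTL value are all hypothetical, and it assumes a file is deleted only when every cleaner in the chain agrees.

```python
from typing import Callable, Dict, Iterable, List, Set

# A cleaner takes candidate files and returns the ones it allows to be deleted.
Cleaner = Callable[[List[str]], List[str]]


def older_than(ages: Dict[str, int], ttl: int) -> Cleaner:
    """Allow deletion only for files whose age (seconds) exceeds ttl."""
    def cleaner(files: List[str]) -> List[str]:
        return [f for f in files if ages[f] > ttl]
    return cleaner


def not_in_snapshot(referenced: Set[str]) -> Cleaner:
    """Allow deletion only for files that no snapshot references."""
    def cleaner(files: List[str]) -> List[str]:
        return [f for f in files if f not in referenced]
    return cleaner


def deletable(files: Iterable[str], chain: List[Cleaner]) -> List[str]:
    """Run cleaners in order; each one sees only what the previous one kept."""
    candidates = list(files)
    for cleaner in chain:
        candidates = cleaner(candidates)
        if not candidates:
            break  # nothing left to check, later cleaners are skipped
    return candidates


if __name__ == "__main__":
    ages = {"hfile-a": 600, "hfile-b": 10, "hfile-c": 900}  # hypothetical
    chain = [older_than(ages, ttl=300), not_in_snapshot({"hfile-c"})]
    print(deletable(ages, chain))
```

Because each cleaner only sees what the previous one let through, placing the cleaner that rejects the most files first means the later ones have less work to do. The final result does not depend on the order, only the cost does.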